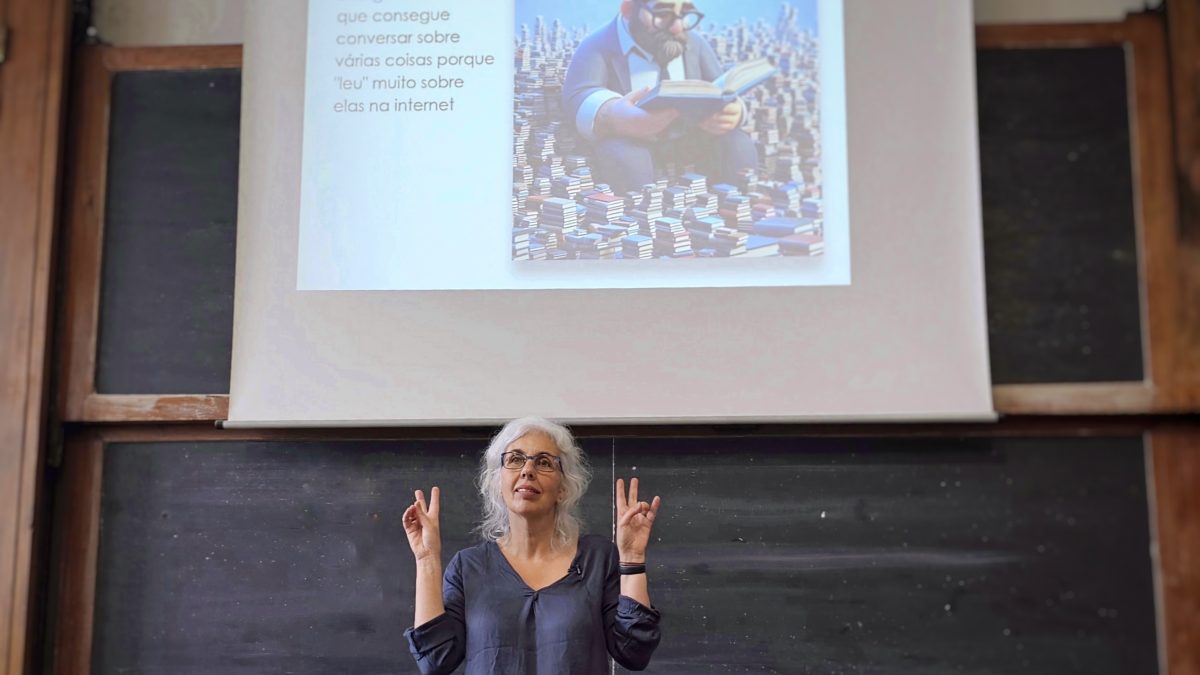

“GPT is wonderful! Use it without fear, but with caution,” advises Luísa Coheur at Técnico Open Day

The auditorium was packed, mostly with young people eager to hear Luísa Coheur’s talk at Técnico Open Day, “ChatGPT – potentials and risks.” No one left disappointed: a good share of the audience stayed past the scheduled end for a lively conversation with the speaker, a researcher at INESC-ID’s Human Language Technologies (HLT) lab and a teacher at Técnico.

The talk opened with a look back at the origins of the now ubiquitous Large Language Models (LLMs). “They were not born today; they are the result of many years of study in natural language processing and machine learning,” the researcher noted.

Since its beginnings in the 1960s, the field has grown steadily, with an impressive leap after the first public presentation of GPT, the most famous LLM, in 2018. “The first versions generated text that was correct, but still a bit confusing,” Luísa told the audience. “But with GPT-3 it’s madness!”

A self-declared enthusiast of these models, the researcher and teacher urged the students to make the tool part of their lives, including their academic work. “I use it all the time, to prepare classes or to make presentations like this one,” Luísa revealed, pointing to the illustrations in her talk, all created through instructions given to the model.

While the first half of the talk was devoted to the advantages of using LLMs, the second focused on the risks. “Never trust it completely; always check.” Voice and image manipulation, fabricated sentences and invented sources are the most critical weaknesses of this technology. And there is only one way to counter them: to know the technology well and be aware of its flaws.

As former US President Franklin D. Roosevelt famously said, “The only thing we have to fear is fear itself.”

Another presentation that drew strong interest from participants was about the first University CubeSat, a satellite entirely conceived and developed in Portugal by a collaborative team that includes INESC-ID researchers Gonçalo Tavares and Moisés Piedade. The talk was given by João Paulo Monteiro, one of the researchers responsible for the project, who will likely be in French Guiana in September to attend the launch.

(Image: © 2024 INESC-ID)