The expression of hate speech against Afro-descendant, Roma, and LGBTQ+ communities in YouTube comments

What’s in a word, and especially one mobilized for online hate speech (OHS)? A team of INESC-ID researchers has asked exactly that.

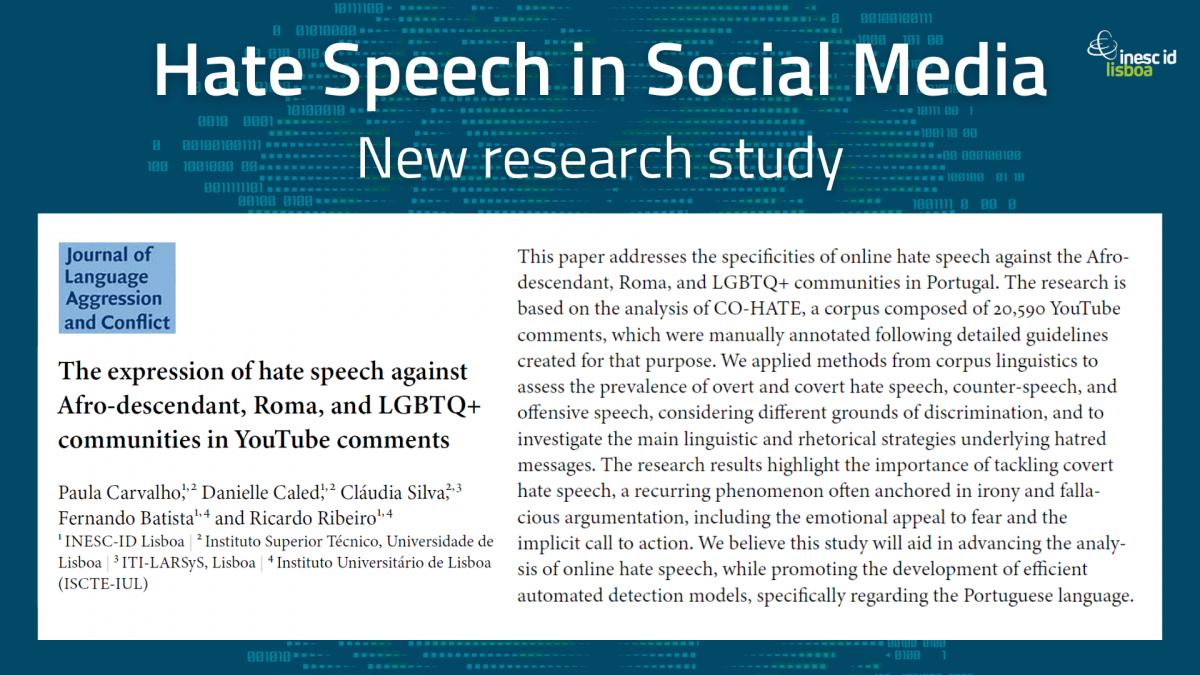

Authored by INESC-ID Information and Decision Support Systems (IDSS) researchers Paula Carvalho and Danielle Caled, and Human Language Technologies (HLT) researchers Fernando Batista and Ricardo Ribeiro (together with Cláudia Silva from ITI-LARSyS)*, the paper "The expression of hate speech against Afro-descendant, Roma, and LGBTQ+ communities in YouTube comments" was published this month in the Journal of Language Aggression and Conflict. It explores the prevalence of overt and covert hate speech, counter-speech and offensive speech in CO-HATE (Counter, Offensive and Hate speech), a corpus of 20,590 Portuguese YouTube comments posted by more than 8,000 different online users.

The team asked two simple yet challenging questions: 1) how does OHS against the Afro-descendant, Roma, and LGBTQ+ communities materialize in the Portuguese social context? and 2) which are the main linguistic and rhetorical features underlying the expression of covert hate speech? To answer them, the researchers created a detailed database of written Portuguese, essential for studying and identifying online hate speech targeting these communities on social media. They analyzed the specific characteristics of hateful comments towards these groups by combining quantitative and qualitative research methods based on corpus linguistics, which analyzes large collections of texts to uncover patterns and relationships between words and structures, and so provides data-driven insights into how language is used. They then measured agreement among annotators when identifying OHS and related topics.
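To make the idea of annotator agreement concrete, here is a minimal sketch of Cohen's kappa, one common way to measure agreement between two annotators while correcting for chance. The article does not say which agreement measure the team used, so the choice of kappa is an assumption, and the function name `cohen_kappa`, the label names and the toy annotations are all hypothetical.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items.

    Illustrative only: the study's actual agreement measure is not
    stated in the article.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items.")

    n = len(labels_a)

    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement by chance, from each annotator's label distribution.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical annotations for six comments (label names are assumptions).
annotator_a = ["hate", "hate", "offensive", "counter", "none", "none"]
annotator_b = ["hate", "offensive", "offensive", "counter", "none", "hate"]

kappa = cohen_kappa(annotator_a, annotator_b)
print(f"Cohen's kappa: {kappa:.3f}")
```

A value of 1 indicates perfect agreement and a value near 0 indicates agreement no better than chance. With more than two annotators, a measure such as Fleiss' kappa or Krippendorff's alpha would be the usual choice.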

By studying how people express hatred in their comments, the team found that comment writers often use specific language and persuasive techniques. They also discovered that hate speech is often hidden behind irony and misleading arguments, a kind of speech that tries to make people afraid and encourages them to take action.

This study offers valuable insights that can help detect online hate speech more effectively. It also deepens our understanding of how hate speech works online in Portugal, especially towards marginalized groups. Furthermore, the corpus created by Paula Carvalho et al. will be a valuable resource for those interested in developing methods to detect both obvious and hidden hate speech, as well as other related behaviors like counter-speech and offensive language, in Portuguese.

Future research avenues might involve expanding this study to other social media platforms like Twitter and including more communities, such as migrants and refugees. The team is also planning to involve more annotators and to take their social backgrounds into account, in order to better assess agreement between different communities.

This project follows a very successful research line at INESC-ID. Last year we reported on the FCT-funded HATE COVID-19.PT project, coordinated by Paula Carvalho, which created methods for semi-automatically assembling a large-scale annotated Portuguese corpus covering online hate speech.

*Paula Carvalho, Danielle Caled and Cláudia Silva are also affiliated with Instituto Superior Técnico, and Ricardo Ribeiro with Instituto Universitário de Lisboa (ISCTE-IUL).